More Annotations

A complete backup of https://alfath-store.com

A complete backup of https://cooph.com

A complete backup of https://pelerin.com

A complete backup of https://justinelarbalestier.com

A complete backup of https://bibliotheekmb.nl

A complete backup of https://iriveramerica.com

A complete backup of https://webmoney.ru

A complete backup of https://gerotac.com.co

A complete backup of https://kazan-stanki.com

A complete backup of https://decisiongames.com

A complete backup of https://googlefeud.com

A complete backup of https://reflexology-usa.org

Favourite Annotations

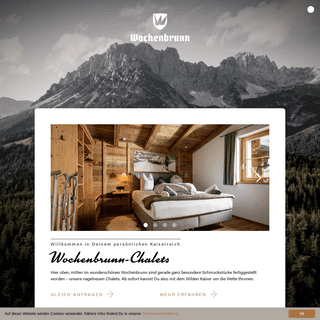

A complete backup of https://wochenbrunn.com

Are you over 18 and want to see adult content?

A complete backup of https://artistmerchant.ru

Are you over 18 and want to see adult content?

A complete backup of https://marrakechalaan.com

Are you over 18 and want to see adult content?

A complete backup of https://virusinfo.info

Are you over 18 and want to see adult content?

A complete backup of https://agensitusjudionline.com

Are you over 18 and want to see adult content?

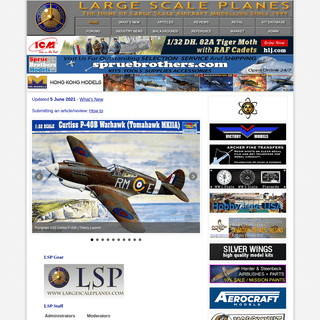

A complete backup of https://largescaleplanes.com

Are you over 18 and want to see adult content?

A complete backup of https://devaka.ru

Are you over 18 and want to see adult content?

A complete backup of https://ossalumni.org

Are you over 18 and want to see adult content?

A complete backup of https://energynet.de

Are you over 18 and want to see adult content?

A complete backup of https://apprenticeships.gov.uk

Are you over 18 and want to see adult content?

A complete backup of https://cpravka78.com

Are you over 18 and want to see adult content?

A complete backup of https://nibletz.com

Are you over 18 and want to see adult content?

Text

OFF THE CONVEX PATH

Off the Convex Path Contributors: Sanjeev Arora; Nisheeth Vishnoi; Nadav Cohen. Former contributors: Moritz Hardt. Mission statement: The notion of convexity underlies a lot of beautiful mathematics.

GENERALIZATION THEORY AND DEEP NETS, AN INTRODUCTION

Deep learning holds many mysteries for theory, as we have discussed on this blog. Lately many ML theorists have become interested in the generalization mystery: why do trained deep nets perform well on previously unseen data, even though they have way more free parameters than the number of datapoints (the classic “overfitting” regime)?

ULTRA-WIDE DEEP NETS AND NEURAL TANGENT KERNEL (NTK)

This results in a significant new benchmark for performance of a pure kernel-based method on CIFAR-10, being 10% higher than methods reported by Novak et al. (2019). The CNTKs also perform similarly to their CNN counterparts. This means that ultra-wide CNNs can achieve reasonable test performance on CIFAR-10.

AN EQUILIBRIUM IN NONCONVEX-NONCONCAVE MIN-MAX OPTIMIZATION

Min-max optimization of an objective function is a powerful framework in optimization, economics, and ML, as it allows one to model learning in the presence of multiple agents with competing objectives. In ML applications, such as GANs and adversarial robustness, the min-max objective function may be nonconvex-nonconcave.

ESCAPING FROM SADDLE POINTS

Convex functions are simple: they usually have only one local minimum. Non-convex functions can be much more complicated. In this post we will discuss various types of critical points that you might encounter when you go off the convex path. In particular, we will see that in many cases simple heuristics based on gradient descent can lead you to a local minimum in polynomial time.
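
As a toy illustration of the saddle-point discussion above, the sketch below compares plain gradient descent with a noise-perturbed variant on a hand-picked function f(x, y) = 0.5 x^2 + 0.25 y^4 - 0.5 y^2, which has a saddle at the origin and minima at (0, 1) and (0, -1). The function, step size, noise radius, and helper names are assumptions made for this example; it is not the algorithm analyzed in the post.

```python
import random

def grad(x, y):
    """Gradient of the toy function f(x, y) = 0.5*x**2 + 0.25*y**4 - 0.5*y**2."""
    return x, y**3 - y

def descend(x, y, steps=2000, lr=0.1, noise=0.0, seed=0):
    """Gradient descent; if noise > 0, add a small uniform perturbation at every step."""
    rng = random.Random(seed)
    for _ in range(steps):
        gx, gy = grad(x, y)
        x = x - lr * gx + noise * rng.uniform(-1.0, 1.0)
        y = y - lr * gy + noise * rng.uniform(-1.0, 1.0)
    return x, y

# Starting exactly on the line y = 0, plain gradient descent slides into the saddle (0, 0).
plain = descend(1.0, 0.0)
# A tiny perturbation lets the iterate leave the saddle and settle near (0, 1) or (0, -1).
perturbed = descend(1.0, 0.0, noise=1e-3)
print("plain:", plain)
print("perturbed:", perturbed)
```

The starting point is deliberately placed on the line y = 0, where the gradient never points away from the saddle; a random start would usually avoid this without any added noise.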

WHEN ARE NEURAL NETWORKS MORE POWERFUL THAN NEURAL TANGENT

Empirically, infinite-width NTK kernel predictors perform slightly worse than (though remain competitive with) fully trained neural networks on benchmark tasks such as CIFAR-10 (Arora et al. 2019b). For finite-width networks in practice, this gap is even more profound, as we see in Figure 1: The linearized network is a rather poor approximation of the ...
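
To make "linearized network" concrete: in the NTK setting it usually means the first-order Taylor expansion of the network output in its parameters around the initialization. The sketch below, which assumes a hypothetical one-hidden-unit network and hand-picked parameter values, shows that this expansion is accurate when the parameters barely move and poor when they move far. It does not reproduce the experiment behind Figure 1.

```python
import math

def f(w, x):
    """Toy network f(x; w) = w[1] * tanh(w[0] * x), assumed for illustration."""
    return w[1] * math.tanh(w[0] * x)

def grad_w(w, x):
    """Gradient of f with respect to the parameters w."""
    h = math.tanh(w[0] * x)
    return [w[1] * (1.0 - h * h) * x, h]

def f_lin(w, w0, x):
    """First-order Taylor expansion of f in w around w0 (the linearized network)."""
    g = grad_w(w0, x)
    return f(w0, x) + sum(gi * (wi - w0i) for gi, wi, w0i in zip(g, w, w0))

w0, x = [0.5, 1.0], 1.0
near = [0.51, 1.0]  # parameters that barely moved away from w0
far = [3.5, 1.0]    # parameters that moved a long way from w0
err_near = abs(f(near, x) - f_lin(near, w0, x))
err_far = abs(f(far, x) - f_lin(far, w0, x))
print(err_near, err_far)
```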

BEYOND LOG-CONCAVE SAMPLING (PART 2)

In our previous blog post, we introduced the challenges of sampling distributions beyond log-concavity. We first introduced the problem of sampling from a distribution $p(x) \propto e^{-f(x)}$ given value or gradient oracle access to $f$, as an analogous problem to black-box optimization with oracle access.
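
One standard way to sample from a density proportional to e^{-f(x)} using only a gradient oracle is the unadjusted Langevin iteration. The minimal sketch below assumes f(x) = x^2 / 2, so the target is a standard normal. That is the easy log-concave case, chosen only so the output can be checked; the step size, burn-in length, and function names are likewise assumptions, and the snippet is not taken from the post.

```python
import math
import random

def grad_f(x):
    """Gradient oracle for f(x) = x**2 / 2; exp(-f) is then proportional to a standard normal."""
    return x

def langevin_samples(n, step=0.1, burn_in=1000, seed=0):
    """Unadjusted Langevin iteration: x <- x - step * grad_f(x) + sqrt(2 * step) * noise."""
    rng = random.Random(seed)
    x, out = 0.0, []
    for i in range(burn_in + n):
        x = x - step * grad_f(x) + math.sqrt(2.0 * step) * rng.gauss(0.0, 1.0)
        if i >= burn_in:
            out.append(x)
    return out

samples = langevin_samples(20000)
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(mean, var)  # roughly 0 and roughly 1, up to discretization bias and Monte Carlo error
```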

TENSOR METHODS IN MACHINE LEARNING

UNDERSTANDING OPTIMIZATION IN DEEP LEARNING BY ANALYZING TRAJECTORIES OF GRADIENT DESCENT

Nov 7, 2018 (Nadav Cohen). Neural network optimization is fundamentally non-convex, and yet simple gradient-based algorithms seem to consistently solve such problems. This phenomenon is ...

BEYOND LOG-CONCAVE SAMPLING

As the growing number of posts on this blog would suggest, recent years have seen a lot of progress in understanding optimization beyond convexity.

TRAINING GANS

Training GANs - From Theory to Practice. GANs, originally discovered in the context of unsupervised learning, have had far-reaching implications for science, engineering, and society. However, training GANs remains challenging (in part) due to the lack of convergent algorithms for nonconvex-nonconcave min-max optimization.
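
The convergence difficulty mentioned in the GAN training snippet already shows up on a much simpler objective. The sketch below runs simultaneous gradient descent-ascent on the bilinear function f(x, y) = x y, which is convex-concave and therefore easier than the nonconvex-nonconcave case the post addresses. The objective, step size, and function name are assumptions for illustration only.

```python
def gda(x, y, lr=0.1, steps=100):
    """Simultaneous gradient descent-ascent on f(x, y) = x * y (min over x, max over y)."""
    for _ in range(steps):
        gx, gy = y, x                    # df/dx = y, df/dy = x
        x, y = x - lr * gx, y + lr * gy  # descend in x, ascend in y, both from the old iterate
    return x, y

x0, y0 = 1.0, 1.0
x1, y1 = gda(x0, y0)
r0 = x0 * x0 + y0 * y0
r1 = x1 * x1 + y1 * y1
print(r0, r1)  # squared distance to the equilibrium (0, 0) before and after
```

Each step multiplies the squared distance to the equilibrium (0, 0) by exactly 1 + lr^2, so the iterates spiral outward instead of converging.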

HOW TO ALLOW DEEP LEARNING ON YOUR DATA WITHOUT REVEALING

This is a modern and rigorous version of classic data anonymization techniques whose canonical application is release of noised census data to protect privacy of individuals. This notion was adapted to machine learning by positing that “privacy” in machine learning refers to trained classifiers not being dependent on data of individuals.

EXPONENTIAL LEARNING RATE SCHEDULES FOR DEEP LEARNING

This blog post concerns our ICLR20 paper on a surprising discovery about learning rate (LR), the most basic hyperparameter in deep learning. As illustrated in many online blogs, setting LR too small might slow down the optimization, and setting it too large might make the network overshoot the area of low losses.

CONTRASTIVE UNSUPERVISED LEARNING OF SEMANTIC

Sanjeev Arora, Hrishikesh Khandeparkar, Orestis Plevrakis, Nikunj Saunshi • Mar 19, 2019 • 20 minute read

GENERATIVE ADVERSARIAL NETWORKS (GANS), SOME OPEN

This post describes GANs and raises some open questions about them. The next post will describe our recent paper addressing these questions. A generative model G can be seen as taking a random seed h (say, a sample from a multivariate Normal distribution) and converting it into an output string G(h) that “looks” like a real datapoint.

LANDSCAPE CONNECTIVITY OF LOW COST SOLUTIONS FOR MULTILAYER NETS

A big mystery about deep learning is how, in a highly nonconvex loss landscape, gradient descent often finds near-optimal solutions (those with training cost almost zero) even starting from a random initialization. This conjures an image of a landscape filled with deep pits.

SAY HELLO – OFF THE CONVEX PATH

Say Hello. Before mailing us, consider leaving a comment on any of our posts.

RIP VAN WINKLE'S RAZOR, A SIMPLE NEW ESTIMATE FOR ADAPTIVE

This blog post concerns our new paper, which gives meaningful upper bounds on this sort of trouble for popular deep net architectures, whereas prior ideas from adaptive data analysis gave no nontrivial estimates. We call our estimate Rip van Winkle’s Razor, which combines references to Occam’s Razor and the mythical person who fell asleep ...

GENERALIZATION AND EQUILIBRIUM IN GENERATIVE ADVERSARIAL

The previous post described Generative Adversarial Networks (GANs), a technique for training generative models for image distributions (and other complicated distributions) via a 2-party game between a generator deep net and a discriminator deep net. This post describes my new paper with Rong Ge, Yingyu Liang, Tengyu Ma, and Yi Zhang. We address some fundamental issues about ...

BACK-PROPAGATION, AN INTRODUCTION

Backpropagation gives a fast way to compute the sensitivity of the output of a neural network to all of its parameters while keeping the inputs of the network fixed: specifically, it computes all partial derivatives $\partial f/\partial w_i$, where $f$ is the output and ...
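
The back-propagation snippet above describes computing every partial derivative of the output with respect to the parameters while the input stays fixed. A minimal sketch of that idea follows, assuming a hypothetical three-parameter network f = w[2] * tanh(w[0] * x + w[1]). The reverse pass applies the chain rule from the output back to each parameter, and a finite-difference estimate is included only as a sanity check. The network, parameter values, and function names are illustrative and not from the post.

```python
import math

def forward(w, x):
    """Toy network f = w[2] * tanh(w[0] * x + w[1]); returns f and cached intermediates."""
    z = w[0] * x + w[1]
    h = math.tanh(z)
    return w[2] * h, (x, h)

def backward(w, cache):
    """Reverse pass: apply the chain rule from the output back to each parameter."""
    x, h = cache
    df_dh = w[2]                   # because f = w[2] * h
    df_dz = df_dh * (1.0 - h * h)  # because h = tanh(z)
    return [df_dz * x, df_dz, h]   # df/dw[0], df/dw[1], df/dw[2]

def numeric_grad(w, x, eps=1e-6):
    """Central finite differences, used only to check the reverse pass."""
    grads = []
    for i in range(len(w)):
        up = list(w)
        down = list(w)
        up[i] += eps
        down[i] -= eps
        grads.append((forward(up, x)[0] - forward(down, x)[0]) / (2.0 * eps))
    return grads

w, x = [0.3, -0.2, 1.5], 2.0
out, cache = forward(w, x)
analytic = backward(w, cache)
numeric = numeric_grad(w, x)
print(analytic)
print(numeric)
```

The reverse pass reuses the cached value of h from the forward pass, which is what makes the cost of obtaining all partial derivatives comparable to a single forward evaluation.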

SUBSCRIBE TO OFF THE CONVEX PATH

Subscribe to Off The Convex Path RSS feed. Our RSS feed is located here: RSS feed

BEYOND LOG-CONCAVE SAMPLING (PART 3)

In the first post of this series, we introduced the challenges of sampling distributions beyond log-concavity. In Part 2 we tackled sampling from multimodal distributions: a typical obstacle occurring in problems involving statistical inference and posterior sampling in generative models. In this (final) post ...

OFF THE CONVEX PATH

The notion of convexity underlies a lot of beautiful mathematics. When combined with computation, it gives rise to the area of convex optimization that has had a huge impact on understanding and improving the world we live in. However, convexity does not provide all the answers. Many procedures in statistics, machine learning and nature at ...

IS OPTIMIZATION A SUFFICIENT LANGUAGE FOR UNDERSTANDING

Though the above settings are simple, they suggest that to understand deep learning we have to go beyond the Conventional View of optimization, which focuses only on the value of the objective and the rate of convergence. (1): Different optimization strategies (GD, SGD, Adam, AdaGrad, etc.) lead to different learning algorithms.

OFF THE CONVEX PATH

The notion of convexity underlies a lot of beautiful mathematics. When combined with computation, it gives rise to the area of convex optimization that has had a huge impact on understanding and improving the world we live in. However, convexity does not provide all the answers. Many procedures in statistics, machine learning and nature at ULTRA-WIDE DEEP NETS AND NEURAL TANGENT KERNEL (NTK) This results in a significant new benchmark for performance of a pure kernel-based method on CIFAR-10, being 10% higher than methods reported by Novak et al. (2019). The CNTKs also perform similarly to their CNN counterparts. This means that ultra-wide CNNs can achieve reasonable test performance on CIFAR-10. GENERALIZATION THEORY AND DEEP NETS, AN INTRODUCTION Deep learning holds many mysteries for theory, as we have discussed on this blog. Lately many ML theorists have become interested in the generalization mystery: why do trained deep nets perform well on previously unseen data, even though they have way more free parameters than the number of datapoints (the classic “overfitting” regime)? BACK-PROPAGATION, AN INTRODUCTION SUBSCRIBE TO OFF THE CONVEX PATH Algorithms off the convex path. Subscribe to Off The Convex Path RSS feed. Our RSS feed is located here: RSS feed WHEN ARE NEURAL NETWORKS MORE POWERFUL THAN NEURAL TANGENT Empirically, infinite-width NTK kernel predictors perform slightly worse (though competitive) than fully trained neural networks on benchmark tasks such as CIFAR-10 (Arora et al. 2019b). For finite width networks in practice, this gap is even more profound, as we see in Figure 1: The linearized network is a rather poor approximation ofthe

SAY HELLO – OFF THE CONVEX PATH Say Hello. Before mailing us, consider leaving a comment on any of our posts.

CONTRIBUTING AN ARTICLE The post would appear like this on the web. If you need more than what is in the above example, check out this kramdown reference. You may also use any valid HTML tag in

BEYOND LOG-CONCAVE SAMPLING As the growing number of posts on this blog would suggest, recent years have seen a lot of progress in understanding optimization beyond convexity.

RIP VAN WINKLE'S RAZOR, A SIMPLE NEW ESTIMATE FOR ADAPTIVE This blog post concerns our new paper, which gives meaningful upper bounds on this sort of trouble for popular deep net architectures, whereas prior ideas from adaptive data analysis gave no nontrivial estimates. We call our estimate Rip van Winkle’s Razor, which combines references to Occam’s Razor and the mythical person who fell asleep

EXPONENTIAL LEARNING RATE SCHEDULES FOR DEEP LEARNING Exponential Learning Rate Schedules for Deep Learning (Part 1) Zhiyuan Li and Sanjeev Arora • Apr 24, 2020 • 11 minute read. This blog post concerns our ICLR20 paper on a surprising discovery about learning rate (LR), the most basic hyperparameter in deep learning. As illustrated in many online blogs, setting LR too small might slow down

CONTRASTIVE UNSUPERVISED LEARNING OF SEMANTIC Algorithms off the convex path. Sanjeev Arora, Hrishikesh Khandeparkar, Orestis Plevrakis, Nikunj Saunshi • Mar 19, 2019 • 20 minute read

GENERATIVE ADVERSARIAL NETWORKS (GANS), SOME OPEN Algorithms off the convex path. Since the ability to generate “realistic-looking” data may be a step towards understanding its structure and exploiting it, generative models are an important component of unsupervised learning, which has been a frequent theme on this blog.

LANDSCAPE CONNECTIVITY OF LOW COST SOLUTIONS FOR Landscape Connectivity of Low Cost Solutions for Multilayer Nets. A big mystery about deep learning is how, in a highly nonconvex loss landscape, gradient descent often finds near-optimal solutions (those with training cost almost zero) even starting from a random initialization. This conjures an image of a landscape filled with deep pits.

About Contact

Subscribe

ULTRA-WIDE DEEP NETS AND NEURAL TANGENT KERNEL (NTK) Oct 3, 2019 (Wei Hu and Simon Du). (Crossposted at CMU ML.) Traditional wisdom in machine learning holds that there is a careful trade-off between training error and... Continue

UNDERSTANDING IMPLICIT REGULARIZATION IN DEEP LEARNING BY ANALYZING TRAJECTORIES OF GRADIENT DESCENT Jul 10, 2019 (Nadav Cohen and Wei Hu). Sanjeev’s recent blog post suggested that the conventional view of optimization is insufficient for understanding deep learning, as the value... Continue

LANDSCAPE CONNECTIVITY OF LOW COST SOLUTIONS FOR MULTILAYER NETS Jun 16, 2019 (Rong Ge). A big mystery about deep learning is how, in a highly nonconvex loss landscape, gradient descent often finds near-optimal solutions... Continue

IS OPTIMIZATION A SUFFICIENT LANGUAGE FOR UNDERSTANDING DEEP LEARNING? Jun 3, 2019 (Sanjeev Arora). In this Deep Learning era, machine learning usually boils down to defining a suitable objective/cost function for the learning task... Continue

CONTRASTIVE UNSUPERVISED LEARNING OF SEMANTIC REPRESENTATIONS: A THEORETICAL FRAMEWORK Mar 19, 2019 (Sanjeev Arora, Hrishikesh Khandeparkar, Orestis Plevrakis, Nikunj Saunshi). Semantic representations (aka semantic embeddings) of complicated data types (e.g. images, text, video) have become central in machine learning, and... Continue

THE SEARCH FOR BIOLOGICALLY PLAUSIBLE NEURAL COMPUTATION: A SIMILARITY-BASED APPROACH Dec 3, 2018 (Cengiz Pehlevan and Dmitri "Mitya" Chklovskii). This is the second post in a series reviewing recent progress in designing artificial neural networks (NNs) that resemble natural... Continue

UNDERSTANDING OPTIMIZATION IN DEEP LEARNING BY ANALYZING TRAJECTORIES OF GRADIENT DESCENT Nov 7, 2018 (Nadav Cohen). Neural network optimization is fundamentally non-convex, and yet simple gradient-based algorithms seem to consistently solve such problems. This phenomenon is... Continue

SIMPLE AND EFFICIENT SEMANTIC EMBEDDINGS FOR RARE WORDS, N-GRAMS, AND LANGUAGE FEATURES Sep 18, 2018 (Sanjeev Arora, Mikhail Khodak, Nikunj Saunshi). Distributional methods for capturing meaning, such as word embeddings, often require observing many examples of words in context. But most... Continue

WHEN RECURRENT MODELS DON'T NEED TO BE RECURRENT Jul 27, 2018 (John Miller). In the last few years, deep learning practitioners have proposed a litany of different sequence models. Although recurrent neural networks... Continue

DEEP-LEARNING-FREE TEXT AND SENTENCE EMBEDDING, PART 2 Jun 25, 2018 (Sanjeev Arora, Mikhail Khodak, Nikunj Saunshi). This post continues Sanjeev’s post and describes further attempts to construct elementary and interpretable text embeddings. The previous post described... Continue

DEEP-LEARNING-FREE TEXT AND SENTENCE EMBEDDING, PART 1 Jun 17, 2018 (Sanjeev Arora). Word embeddings (see my old post1 and post2) capture the idea that one can express “meaning” of words using a... Continue

LIMITATIONS OF ENCODER-DECODER GAN ARCHITECTURES Mar 12, 2018 (Sanjeev Arora and Andrej Risteski). This is yet another post about Generative Adversarial Nets (GANs), and based upon our new ICLR’18 paper with Yi Zhang.... Continue

CAN INCREASING DEPTH SERVE TO ACCELERATE OPTIMIZATION? Mar 2, 2018 (Nadav Cohen). “How does depth help?” is a fundamental question in the theory of deep learning. Conventional wisdom, backed by theoretical studies... Continue

PROVING GENERALIZATION OF DEEP NETS VIA COMPRESSION Feb 17, 2018 (Sanjeev Arora). This post is about my new paper with Rong Ge, Behnam Neyshabur, and Yi Zhang which offers some new perspective... Continue

GENERALIZATION THEORY AND DEEP NETS, AN INTRODUCTION Dec 8, 2017 (Sanjeev Arora). Deep learning holds many mysteries for theory, as we have discussed on this blog. Lately many ML theorists have become... Continue

HOW TO ESCAPE SADDLE POINTS EFFICIENTLY Jul 19, 2017 (Chi Jin and Michael Jordan). A core, emerging problem in nonconvex optimization involves the escape of saddle points. While recent research has shown that gradient... Continue

DO GANS ACTUALLY DO DISTRIBUTION LEARNING? Jul 6, 2017 (Sanjeev Arora and Yi Zhang). This post is about our new paper, which presents empirical evidence that current GANs (Generative Adversarial Nets) are quite far... Continue

UNSUPERVISED LEARNING, ONE NOTION OR MANY? Jun 26, 2017 (Sanjeev Arora and Andrej Risteski). Unsupervised learning, as the name suggests, is the science of learning from unlabeled data. A look at the wikipedia page... Continue

GENERALIZATION AND EQUILIBRIUM IN GENERATIVE ADVERSARIAL NETWORKS (GANS) Mar 30, 2017 (Sanjeev Arora). The previous post described Generative Adversarial Networks (GANs), a technique for training generative models for image distributions (and other complicated... Continue

GENERATIVE ADVERSARIAL NETWORKS (GANS), SOME OPEN QUESTIONS Mar 15, 2017 (Sanjeev Arora). Since ability to generate “realistic-looking” data may be a step towards understanding its structure and exploiting it, generative models are... Continue

BACK-PROPAGATION, AN INTRODUCTION Dec 20, 2016 (Sanjeev Arora and Tengyu Ma). Given the sheer number of backpropagation tutorials on the internet, is there really need for another? One of us (Sanjeev)... Continue

THE SEARCH FOR BIOLOGICALLY PLAUSIBLE NEURAL COMPUTATION: THE CONVENTIONAL APPROACH Nov 3, 2016 (Dmitri "Mitya" Chklovskii). Inventors of the original artificial neural networks (NNs) derived their inspiration from biology. However, as artificial NNs progressed, their design... Continue

GRADIENT DESCENT LEARNS LINEAR DYNAMICAL SYSTEMS Oct 13, 2016 (Moritz Hardt and Tengyu Ma). From text translation to video captioning, learning to map one sequence to another is an increasingly active research area in... Continue

LINEAR ALGEBRAIC STRUCTURE OF WORD MEANINGS Jul 10, 2016 (Sanjeev Arora). Word embeddings capture the meaning of a word using a low-dimensional vector and are ubiquitous in natural language processing (NLP).... Continue

A FRAMEWORK FOR ANALYSING NON-CONVEX OPTIMIZATION May 8, 2016 (Sanjeev Arora, Tengyu Ma). Previously Rong’s post and Ben’s post show that (noisy) gradient descent can converge to local minimum of a non-convex function,... Continue

MARKOV CHAINS THROUGH THE LENS OF DYNAMICAL SYSTEMS: THE CASE OF EVOLUTION Apr 4, 2016 (Nisheeth Vishnoi). In this post, we will see the main technical ideas in the analysis of the mixing time of evolutionary Markov... Continue

SADDLES AGAIN Mar 24, 2016 (Benjamin Recht). Thanks to Rong for the very nice blog post describing critical points of nonconvex functions and how to avoid them.... Continue

ESCAPING FROM SADDLE POINTS Mar 22, 2016 (Rong Ge). Convex functions are simple — they usually have only one local minimum. Non-convex functions can be much more complicated. In... Continue

STABILITY AS A FOUNDATION OF MACHINE LEARNING Mar 14, 2016 (Moritz Hardt). Central to machine learning is our ability to relate how a learning algorithm fares on a sample to its performance... Continue

EVOLUTION, DYNAMICAL SYSTEMS AND MARKOV CHAINS Mar 7, 2016 (Nisheeth Vishnoi). In this post we present a high level introduction to evolution and to how we can use mathematical tools such... Continue

WORD EMBEDDINGS: EXPLAINING THEIR PROPERTIES Feb 14, 2016 (Sanjeev Arora). This is a followup to an earlier post about word embeddings, which capture the meaning of a word using a... Continue

NIPS 2015 WORKSHOP ON NON-CONVEX OPTIMIZATION Jan 25, 2016 (Anima Anandkumar). While convex analysis has received much attention by the machine learning community, theoretical analysis of non-convex optimization is still nascent.... Continue

NATURE, DYNAMICAL SYSTEMS AND OPTIMIZATION Dec 21, 2015 (Nisheeth Vishnoi). The language of dynamical systems is the preferred choice of scientists to model a wide variety of phenomena in nature.... Continue

TENSOR METHODS IN MACHINE LEARNING Dec 17, 2015 (Rong Ge). Tensors are high dimensional generalizations of matrices. In recent years tensor decompositions were used to design learning algorithms for estimating... Continue

SEMANTIC WORD EMBEDDINGS Dec 12, 2015 (Sanjeev Arora). This post can be seen as an introduction to how nonconvex problems arise naturally in practice, and also the relative... Continue

WHY GO OFF THE CONVEX PATH? Dec 11, 2015 (blogmasters). The notion of convexity underlies a lot of beautiful mathematics. When combined with computation, it gives rise to the area... Continue


